AR Sandbox

OVERVIEW

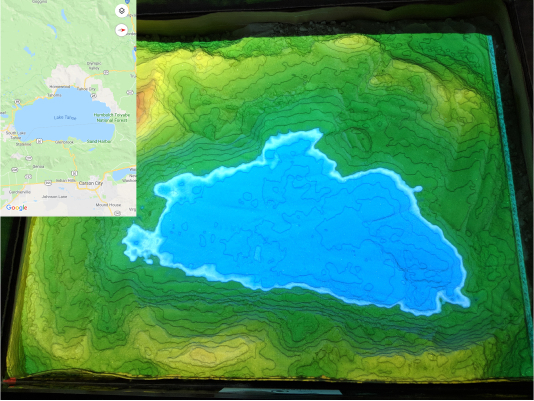

During a co-op term through my university’s co-op program, I had the opportunity to explore new software concepts on one of five sandbox exhibits in British Columbia. The company used the sandbox as an educational tool, bringing it to schools so students could learn about their surrounding environment.

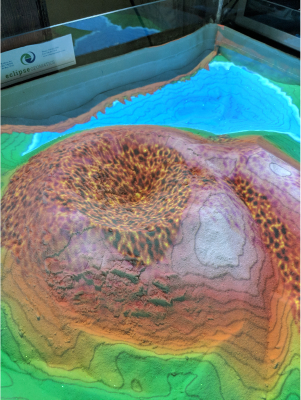

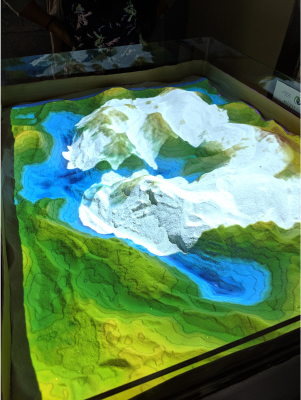

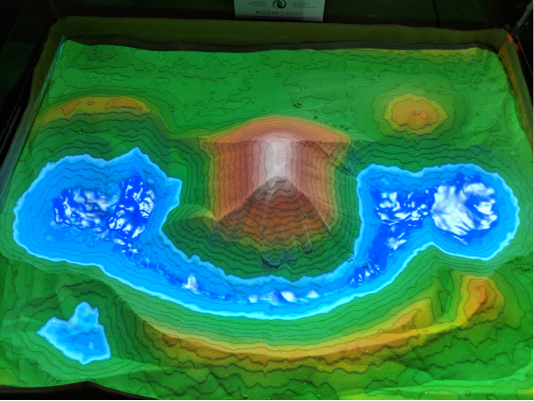

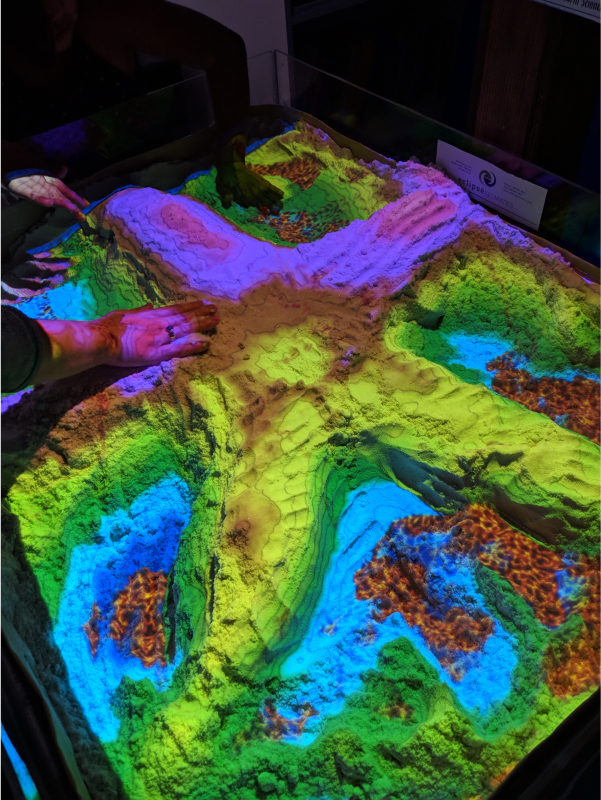

The sandbox is a 30″ x 40″ table filled with sand, with raised borders that keep the sand inside the bin. The sand is Santastik sand, which is moldable and safe for sculpting. A short-throw projector and a Kinect device are mounted above the table. The two devices work with open-source software to detect any displacement of the sand and project colours that correspond to the new depth.
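The depth-to-colour idea can be illustrated with a minimal Python sketch. This is not the exhibit's actual software: the band thresholds, the colours, and the names `COLOUR_BANDS`, `height_to_colour`, and `colour_frame` are hypothetical, and the sketch assumes the sensor reports its distance to the sand surface in millimetres.

```python
# Hypothetical elevation bands: (upper bound in mm above the table base, RGB colour).
# Thresholds and colours are illustrative assumptions, not the exhibit's values.
COLOUR_BANDS = [
    (20, (0, 70, 200)),     # lowest sand: water blue
    (60, (60, 170, 70)),    # low ground: green
    (100, (150, 110, 60)),  # hills: brown
]
PEAK_COLOUR = (255, 255, 255)  # anything higher: snow white


def height_to_colour(height_mm):
    """Return the colour of the first band whose upper bound exceeds the height."""
    for upper_bound, colour in COLOUR_BANDS:
        if height_mm < upper_bound:
            return colour
    return PEAK_COLOUR


def colour_frame(sensor_to_table_mm, depth_frame_mm):
    """Turn a grid of sensor-to-sand distances into a grid of colours to project.

    A sensor mounted above the table sees a smaller distance where the sand is
    piled higher, so sand height = distance to the table base - measured distance.
    """
    return [
        [height_to_colour(sensor_to_table_mm - distance) for distance in row]
        for row in depth_frame_mm
    ]
```

Running `colour_frame` again on each new depth frame is what would let the projected colours follow the sand as it is reshaped.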

ROLE

Sandbox Exhibit Facilitator

UX

4-month co-op term

BACKGROUND

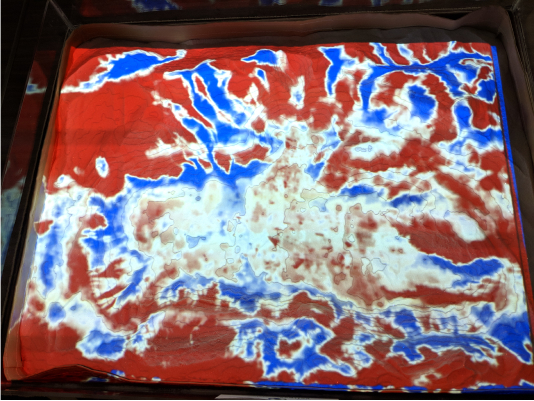

The early version of the sandbox could detect changes in depth within a reasonable response time, but the response was not near-instantaneous, which fell short of the standard we were aiming for. Inaccurate readings and shifts in the colour palette were common.

GOAL

My co-op term involved simplifying the sandbox's operations and taking charge of its setup and takedown when it was brought to venues. A secondary goal was to explore post-development features that would further enhance the sandbox's capabilities.